-

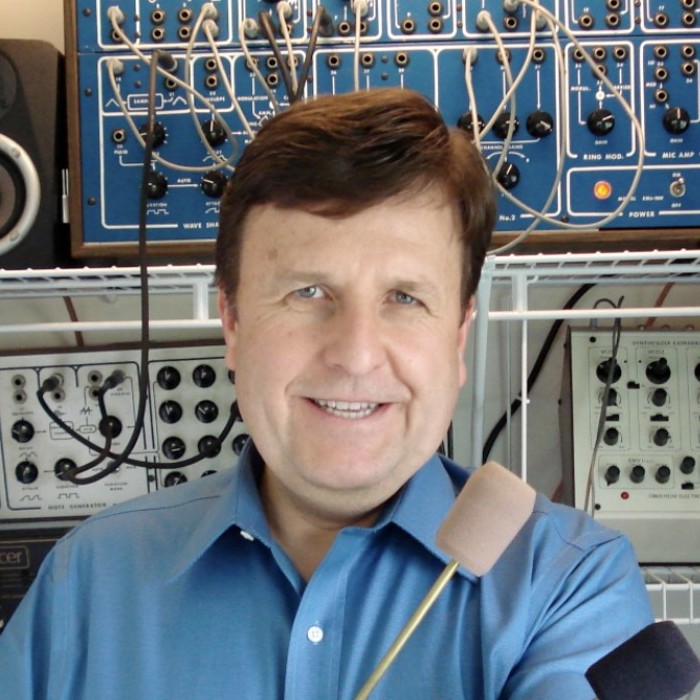

Keynote Speaker

Dr. Richard Boulanger

Dr. Richard Boulanger was born in 1956 and holds a Ph.D. in Computer Music from the University of California, San Diego, where he worked at the Center for Music Experiment’s Computer Audio Research Lab. He continued his computer music research at Bell Labs, CCRMA, the MIT Media Lab, Interval Research, and IBM while working closely with Max Mathews and Barry Vercoe.

For the past 25 years, Dr. Boulanger has been teaching computer music composition, sound design, alternate controllers, and programming at the Berklee College of Music. There, he is a Professor of Electronic Production and Design and has been awarded both the Faculty of the Year Award and the President's Award. He has been a driving force behind the spread of Csound since its early days at MIT. He has taught thousands of students, lectured all over the world, and worked to bring Csound to the OLPC project.

His published work includes two seminal electronic production texts from MIT Press: The Csound Book and The Audio Programming Book.

-

Speakers

Steven Yi

Joachim Heintz

Steven Yi is a composer and programmer. He is the author of the Blue Integrated Music Environment and a core developer of Csound (http://csound.github.io/). He is currently working on a PhD in Digital Arts and Humanities at the National University of Ireland, Maynooth, and expects to finish his degree in summer 2015. In his free time, he enjoys practicing and studying T'ai Chi.

Joachim Heintz has used Csound since 1995 and has been active in the community for the past decade. Together with Iain McCurdy and others, he founded and continues to maintain the Csound textbook at flossmanuals.net. He contributed to CsoundQt, which he also uses for the electronics in his compositions. In addition to writing music, he enjoys writing texts. He is head of the electronic studio at the University of Music in Hannover, Germany, where he hosted the first Csound Conference in 2011.

-

Speakers

Bernt Isak Wærstad

Gleb Rogozinsky

Bernt Isak Wærstad is a musician, sound artist, programmer and sound designer. He is also a lecturer at NTNU and the Norwegian Music Academy, where he teaches instrument design and performance utilizing digital music technology. Together with Alex Hofmann and Kim Ervik, he started the COSMO Project, an open source/hardware Raspberry Pi and Csound-based effect pedal, in 2013. Bernt Isak is also a member of the Partikkel Audio team, the developer of the free open-source Csound-based Hadron Particle Synthesizer, where his efforts have mainly been in Max for Live programming, VST/AU programming and sound design. For his live performance setups, often using Cabbage or the csound~ external, he constantly finds new ways to use the power of Csound.

Scientist, composer and programmer Gleb Rogozinsky has worked with Csound for almost ten years. He developed GENwave for wavelets in Csound. He is a deputy head of Medialabs at the Bonch-Bruevich St. Petersburg University of Telecommunications, Russia, where he also lectures on computer music and audio programming. His interests are modern music, sound synthesis, and artificial intelligence. Gleb holds a PhD in Audiovisual Processing. He is also the author of several serial compositions. Another part of his life is devoted to industrial music.